poison in the corpus: the antidote in sight

Last week I attended data:unplugged in Muenster, a very commercial event that raised more questions than it answered, but it did make me think. At the end of his keynote, Sascha Lobo said something that stuck with me. If I understood him correctly, he argued that we need a positive vision for a society with ai, because if we don't develop one, doom is certain.

I think Sascha Lobo is right. It's all too easy to envision a hundred ways the ai revolution can go wrong. Mass unemployment. Autonomous warfare. Total surveillance. The dystopian possibilities are obvious, none of them unrealistic, some already underway.

But I want to pick up Sascha Lobo's call and talk about an area where ai can genuinely improve humanity and society, and which, as I'll argue by the end, represents a necessary step in the development of artificial intelligence itself.

the unfinished project

Two weeks ago, Juergen Habermas died. You might think that's news for the feuilletons, for philosophy seminars, for people who enjoy discussing discourse ethics. But since my classicist father would certainly have insisted on the philosophical context, I want to bring that perspective in briefly:

In 1784, Immanuel Kant posed the question of what enlightenment is, and gave an answer that still holds:

"Aufklaerung ist der Ausgang des Menschen aus seiner selbstverschuldeten Unmuendigkeit."

("Enlightenment is man's emergence from his self-imposed immaturity.")

— Immanuel Kant, 1784 [13]

Sapere aude: have the courage to use your own understanding. This was not an academic appeal. It was a political program: reason, not authority, as the foundation of human coexistence. And science as the institutionalized practice of that reason.

In 1934, Karl Popper supplied the missing hinge between enlightenment and scientific practice [19]: it's not what can be proven that makes a theory scientific, but what can be refuted. Falsifiability as a demarcation criterion. Eleven years later, in the shadow of two world wars, he extended the argument to politics in The Open Society and Its Enemies [20]:

"The secret of intellectual excellence is the spirit of criticism; it is intellectual independence."

— Karl Popper, 1945 [20]

Where criticism is suppressed, in politics as in science, closure takes hold. And closure is the beginning of the end.

Habermas took this thought further and followed it to its political conclusion: rational discourse, the evidence-based exchange of arguments in the public sphere, is the foundation on which democratic societies ground their legitimacy [14]. Not power. Not tradition. Not belief. But the shared commitment to reason. He called the enlightenment an "unvollendetes Projekt der Moderne" (an unfinished project of modernity): not failed, but not yet fulfilled.

Why am I telling you this in a text about ai and science?

Because if Kant, Popper, and Habermas are right, science is not simply a method. It is the foundation on which free societies stand. If that foundation becomes fragile, this is not a parochial problem for academics to sort out among themselves. Then something is at stake that is larger than any single study, any single result, any single discipline.

science is broken

A large portion of what we call science no longer deserves the name.

Yuval Noah Harari argues in Sapiens that science was never independent:

"Most scientific studies are funded because somebody believes they can help attain some political, economic or religious goal."

— Yuval Noah Harari, Sapiens [1]

I think that's true. It was always like this. Research was always intertwined with power and money.

But what has happened over the past thirty years goes beyond this historical entanglement. The economization of science introduced false success indicators into the scientific enterprise, creating perverse incentives that have poisoned the corpus of scientific knowledge over the years. At some point, and it would be worthwhile research to determine exactly when, someone decided that the number of published papers was a meaningful indicator of scientific performance.

In my view, this was perhaps the most consequential mistake in the history of science.

Publish or perish. Those who do honest research, carefully, slowly, with negative results that nobody wants to publish, risk their careers. Gregor Mendel counted peas for eight years [18]. Twenty-eight thousand plants, meticulously crossbred and documented, only to publish a single paper: "Versuche ueber Pflanzen-Hybriden" (Experiments on Plant Hybridization), 1866. It was ignored. For thirty-four years. It wasn't until 1900 that de Vries, Correns, and von Tschermak independently rediscovered his results and recognized that Mendel had laid the foundations of modern genetics. Today, Mendel would lose his position after two years without a publication.

And so the rule today is: whoever publishes positive results quickly and churns out as many highly citable papers as possible becomes a professor, or at least gets another fixed-term contract as a research associate.

Add to this the attention economy, in which scientists increasingly present themselves like influencers. Sensational titles get more clicks. Surprising results get cited more often. Nuanced papers with careful conclusions disappear in the noise. All of this in a highly polarized and politicized environment where, as Harari described, power deliberately uses science for its own interests.

This system is carried and sustained by the "academic" publishers, whose motivations and economic optimization differ little from other forms of publishing. Elsevier alone generates over two billion euros in annual revenue [2] from this model.

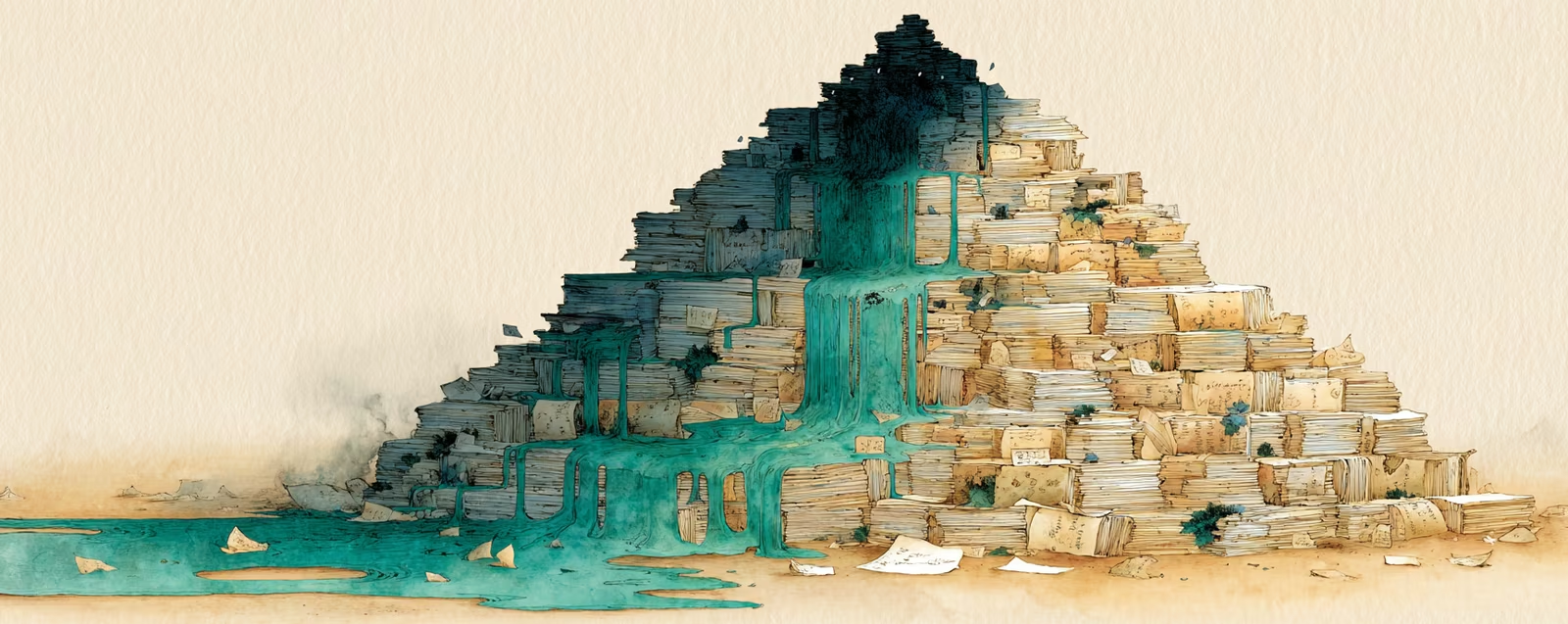

The result: things are being mass-produced that look like science. With abstracts. With methodology. With peer review and impact factors. The form is there, but the substance is at best hollow, all too often dangerous. And each individual scientist is invited to think that their little publication wouldn't matter in the unfathomable mass of scientific-looking material, while the scientific corpus has been poisoned piece by piece over the years.

And trust is eroding with it. Since the covid crisis at the latest, it has become visible to everyone that scientific discourse is increasingly becoming a battleground for ideological conflicts. The Edelman Trust Barometer 2024 shows that a clear majority of respondents express a "suspicion of science's independence from politics and money" [4]. People sense that something is wrong. They can't precisely articulate it. But the distrust is there. And it is justified.

If you think that's an exaggeration, I recommend looking at the numbers. In 2015, the Open Science Collaboration project attempted to replicate one hundred psychological studies. One hundred studies, all published, all peer-reviewed, all in respected journals. The result: fewer than half could be replicated [5]. But that was just the beginning. The Many Labs projects followed up systematically: Many Labs 2 attempted to replicate twenty-eight studies, and only half succeeded. Many Labs 5: two out of ten [16]. And then came DARPA. The US Department of Defense, which partly bases its strategic decisions on social science research, wanted to know which knowledge could still be trusted. The SCORE program [15] extracted over seven thousand scientific claims from eight disciplines and systematically assessed their robustness. The result for psychology and education: a predicted replication rate of forty-two percent. Personally, I think that's still optimistic.

When the United States Department of Defense starts auditing the robustness of social science, that should give you pause.

Medicine is no better off. John Ioannidis published a paper in 2005 titled "Why Most Published Research Findings Are False" [6]. It has been cited thousands of times since. And the structure that produced this problem is in excellent health twenty years later. It has even intensified, not least because the incentive-driven academization of society puts ever more people into the system who must produce papers without genuine research interest, resources, or sufficient time.

what would help

OK, enough complaining: my boss would already be rolling her eyes because I've been dwelling on the problem for too long. Time to be constructive:

Three voices approaching a common point from entirely different directions:

The first step, and the most obvious one, is systematic falsification: Popper's program, scaled to the entire scientific corpus. In my own academic work, I came across Michele B. Nuijten years ago, who built statcheck [7], a tool that automatically recalculates reported test statistics in scientific papers. When she applied it in 2015 to over two hundred and fifty thousand p-values from psychological journals, she found at least one statistical inconsistency in roughly half of all papers, and in one paper in eight an inconsistency that calls the result into question [7]. The problem: statcheck is a conventional rule-based script and therefore limited to statistics reported in a standardized format.
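
To make the idea concrete, here is a minimal sketch of that kind of recalculation. It is not statcheck itself: I assume only z-tests reported in an APA-like format, and the pattern `Z_REPORT`, the `Check` record, and the function names are hypothetical names I made up for illustration. The sketch recomputes the two-tailed p-value from the reported statistic and compares it with the reported one.

```python
import math
import re
from dataclasses import dataclass
from typing import List

# Hypothetical pattern (an assumption, not statcheck's own) for reports
# such as "z = 2.10, p = .036" or "z = 2.58, p < .01".
Z_REPORT = re.compile(
    r"\bz\s*=\s*(-?\d*\.?\d+)\s*,\s*p\s*([<=])\s*(\d*\.\d+)", re.IGNORECASE
)


@dataclass
class Check:
    reported_text: str    # the matched snippet from the paper
    computed_p: float     # p-value recomputed from the reported z
    consistent: bool      # reported p agrees with the recomputed p
    decision_error: bool  # disagreement flips significance at alpha


def two_tailed_p(z: float) -> float:
    """Two-tailed p-value of a standard normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def check_reports(text: str, alpha: float = 0.05) -> List[Check]:
    """Recompute every z-test p-value found in `text` and flag mismatches."""
    checks = []
    for match in Z_REPORT.finditer(text):
        z = float(match.group(1))
        comparator = match.group(2)
        p_text = match.group(3)
        reported_p = float(p_text)
        computed_p = two_tailed_p(z)
        if comparator == "=":
            # Allow for rounding to the number of decimals that were reported.
            decimals = len(p_text.split(".")[1])
            consistent = abs(computed_p - reported_p) <= 0.5 * 10 ** -decimals
        else:  # reported as "p < x"
            consistent = computed_p < reported_p
        if comparator == "<":
            claimed_significant = reported_p <= alpha
        else:
            claimed_significant = reported_p < alpha
        decision_error = (not consistent) and (
            claimed_significant != (computed_p < alpha)
        )
        checks.append(Check(match.group(0), computed_p, consistent, decision_error))
    return checks


sample = "Group A differed (z = 2.10, p = .036); group B as well (z = 1.50, p = .03)."
for check in check_reports(sample):
    print(check)
```

In this made-up sample, the first report is consistent with its own statistic, while the second is flagged: a z of 1.50 does not support a p-value of .03, and the mismatch changes the significance decision. Anything not written in this rigid format slips through, which is exactly the limitation of the scripted approach.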

LLMs open the possibility of extending this idea toward argumentation, methodology, and substantive consistency. I envision a quality rating that works independently of the broken peer review system. Not assigned by two overworked reviewers who have no interest in publishing results that contradict their own views, but computed through a systematic analysis of methodology, data availability, and statistical robustness, independent of consistency with the existing knowledge corpus.

no human can do this. ai can.

The second step is replication. What cannot be replicated is worthless. This is not a radical thesis, it's the foundational principle of the scientific method, and it's a principle we have so thoroughly ignored that one must ask whether we ever meant it. Studies without accessible raw data are published. Length restrictions force authors to cut details essential for replication. And replication studies themselves are barely conducted because they don't build careers and don't fill journals.

This month, Andrej Karpathy demonstrated with AutoResearch [8] what an alternative could look like: an ai agent that forms hypotheses, writes code, runs experiments, and evaluates the results. Twelve experiments per hour. Over a hundred per night. Karpathy himself ran seven hundred experiments in two days and found twenty optimizations that improved his model by eleven percent, a model he had previously worked on by hand for months [9].

"The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement."

— Andrej Karpathy [8]

That's formulated for ml training. But the principle, in my view, is transferable.
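
What I mean by "the principle" fits in a few lines. The following is a toy sketch of the propose, run, evaluate, keep loop, and explicitly not Karpathy's implementation: `run_experiment` is a stand-in I invented for a real experiment (here just a simple formula with a known optimum), and `propose` replaces the agent's hypothesis with a random nudge. All names and numbers are assumptions for illustration.

```python
import random
from typing import Dict, List, Tuple

Config = Dict[str, float]


def run_experiment(config: Config) -> float:
    """Stand-in for a real experiment: returns a loss, lower is better."""
    return (config["learning_rate"] - 0.3) ** 2 + (config["dropout"] - 0.1) ** 2


def propose(config: Config, rng: random.Random) -> Config:
    """Stand-in for the agent's hypothesis: nudge one setting at random."""
    key = rng.choice(sorted(config))
    candidate = dict(config)
    candidate[key] += rng.uniform(-0.05, 0.05)
    return candidate


def research_loop(
    start: Config, budget: int, seed: int = 0
) -> Tuple[Config, float, List[float]]:
    """Run `budget` experiments and keep only changes that measurably help."""
    rng = random.Random(seed)
    best, best_loss = start, run_experiment(start)
    history = [best_loss]
    for _ in range(budget):
        candidate = propose(best, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:  # accept only verified improvements
            best, best_loss = candidate, loss
        history.append(best_loss)
    return best, best_loss, history


best, loss, history = research_loop(
    {"learning_rate": 0.9, "dropout": 0.5}, budget=200
)
print(f"loss went from {history[0]:.4f} to {loss:.4f}")
```

The interesting part is the single `if`: a change survives only when the experiment says it helps. Swap the formula for a replication attempt and the random nudge for a model-generated hypothesis, and the loop stays the same.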

The third step is the one that fascinates me most: securing the knowledge base. An ai system that can read, understand, and cross-reference the entire scientific corpus of a discipline would be able to do something no human and no team of humans has ever been able to do. It would find contradictions. Not the obvious ones that humans also notice, but the subtle inconsistencies between studies that were never read side by side because they were published in different subdisciplines, different journals, different decades.
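
As a deliberately naive sketch of what such cross-referencing means mechanically: assume the hard part, extracting structured claims from papers, has already been done, and each claim is reduced to "cause raises or lowers effect". The `Claim` record, the field names, and the placeholder entries below are all hypothetical and not taken from real studies.

```python
from collections import defaultdict
from dataclasses import dataclass
from itertools import combinations
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Claim:
    source: str     # hypothetical paper identifier
    subfield: str   # where the paper was published
    cause: str
    effect: str
    direction: int  # +1: cause raises effect, -1: cause lowers effect


def find_contradictions(claims: List[Claim]) -> List[Tuple[Claim, Claim]]:
    """Pair up claims about the same relation that point in opposite directions."""
    by_relation: Dict[Tuple[str, str], List[Claim]] = defaultdict(list)
    for claim in claims:
        by_relation[(claim.cause, claim.effect)].append(claim)
    conflicts = []
    for group in by_relation.values():
        for first, second in combinations(group, 2):
            if first.direction != second.direction:
                conflicts.append((first, second))
    return conflicts


# Placeholder claims, invented purely for illustration.
claims = [
    Claim("paper-A", "subfield-1", "intervention_x", "outcome_y", +1),
    Claim("paper-B", "subfield-2", "intervention_x", "outcome_y", -1),
    Claim("paper-C", "subfield-2", "intervention_x", "outcome_z", +1),
]
for first, second in find_contradictions(claims):
    print(f"{first.source} ({first.subfield}) vs {second.source} ({second.subfield})")
```

The grouping is trivial. The open problem is everything upstream of it: deciding that two papers from different subdisciplines and decades are talking about the same relation at all. That is the part I expect language models to change.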

Thomas Kuhn described in The Structure of Scientific Revolutions [21] exactly how this works: in every discipline there are theories that are logically incompatible but coexist because the scientific community works within a paradigm and does not question its basic assumptions, even as anomalies accumulate. Resolution comes only through a paradigm shift, and that is rarely voluntary.

Why?

why we tolerate contradictions

In 1957, Leon Festinger described the concept of cognitive dissonance [11]: humans experience contradictions in their worldview as so uncomfortable that they actively develop strategies to avoid them. Not to resolve them. To avoid them. We ignore contradictory evidence. We rationalize inconsistent beliefs. We avoid information that threatens our worldview.

this is not a bug. it's a feature.

A coherent but false model of the world is, in my view, more actionable than an inconsistent but partially correct one. Someone on the savanna who must quickly decide whether a shadow is a predator benefits more from an internally consistent, if occasionally wrong, model than from a more accurate model that paralyzes them with nuance. The algorithm of darwinian evolution did not optimize us for truth. It optimized us for agency.

And that is precisely why the human knowledge corpus is full of unresolved contradictions. Not because we couldn't see them. But because it feels better to ignore them than to deliberately design experiments that could resolve them.

why this is a problem for ai

Elon Musk argues in the Dwarkesh Podcast that truth-seeking is absolutely fundamental:

"You can't understand the universe if you're delusional."

— Elon Musk [10]

And he draws an interesting parallel. He talks about HAL 9000, Kubrick's ai that goes insane. In Arthur C. Clarke's novel, written in parallel with the film, the cause is explicitly stated: HAL receives two directives that cannot be simultaneously fulfilled, to successfully carry out the mission and simultaneously conceal its true purpose from the crew [17]. In the sequel, 2010: Odyssey Two, Clarke gives the result a name, a "Hofstadter-Moebius loop": an unresolvable logical loop that leads to system failure.

Musk frames this as a design principle for Grok:

"Axioms as close to true as possible, no contradictory axioms, conclusions that necessarily follow."

— Elon Musk [10]

But the argument is bigger than Grok. If an ai is trained on a knowledge corpus full of contradictions, it cannot form a coherent world model. It will reproduce exactly the same inconsistencies that exist in the training material.

This is not merely an alignment principle. It is a functional prerequisite for intelligence.

And with that, the relationship between ai and science becomes bidirectional. Science needs ai to clean up its own mess. And ai needs clean science to become intelligent. An ai can only be as smart as its underlying knowledge corpus is consistent. That is Musk's real argument for his "truth-seeking core": not ethics, not political correctness, but functional necessity.

the circle

Now I hear the objection: doesn't ai make the problem worse first? Ai-generated papers, synthetic data, a flood of machine-produced texts that amplify the noise before the filters can catch up. The objection is valid. And it's already reality: the first ai-generated papers have appeared in peer-reviewed journals, and the tools that produce bad science are cheaper and faster than the ones that detect it.

But that is precisely the argument for, not against, the development described here. When the flood rises, you need better filters, not the abandonment of filters. And the filters we have haven't worked for a long time.

Karpathy described the concept of "verifiability" as the central feature of the new ai paradigm in a blog post [12]. If something is verifiable, it can be optimized. If not, it's noise. That sounds like a technical observation. It's an epistemological one.

Science that is replicable is verifiable. Science that is verifiable can be improved by ai. And better science makes the ai that is trained on it better, which in turn makes the ai better at auditing and improving science.

This is not linear progress. This is a self-reinforcing cycle. And it has already begun.

The sum of truly secured human knowledge, that which actually has an effect and is replicable, is shockingly small. But that is exactly the point: the value is not the mass of published material, but the core that withstands scrutiny. Ai can identify, secure, and expand that core.

Not in a hundred years. Now.

The scientific enterprise faces a sudden shock that will call entire disciplines into question. Studies that are not replicable. Theories that contradict each other. Entire branches of research standing on foundations that cannot withstand systematic scrutiny. Psychology has had a foretaste. But psychology is also one of the few disciplines that have repeatedly, throughout their history, voluntarily submitted to this cleansing examination.

The result will be painful. It will take decades to heal these wounds. In medicine. In psychology. In the social sciences. But the alternative, continuing to build on a foundation we know is fragile, is worse.

It's easy to see a hundred ways ai can go wrong. This is one way it can go right.

translated from the german original by claude opus 4.6